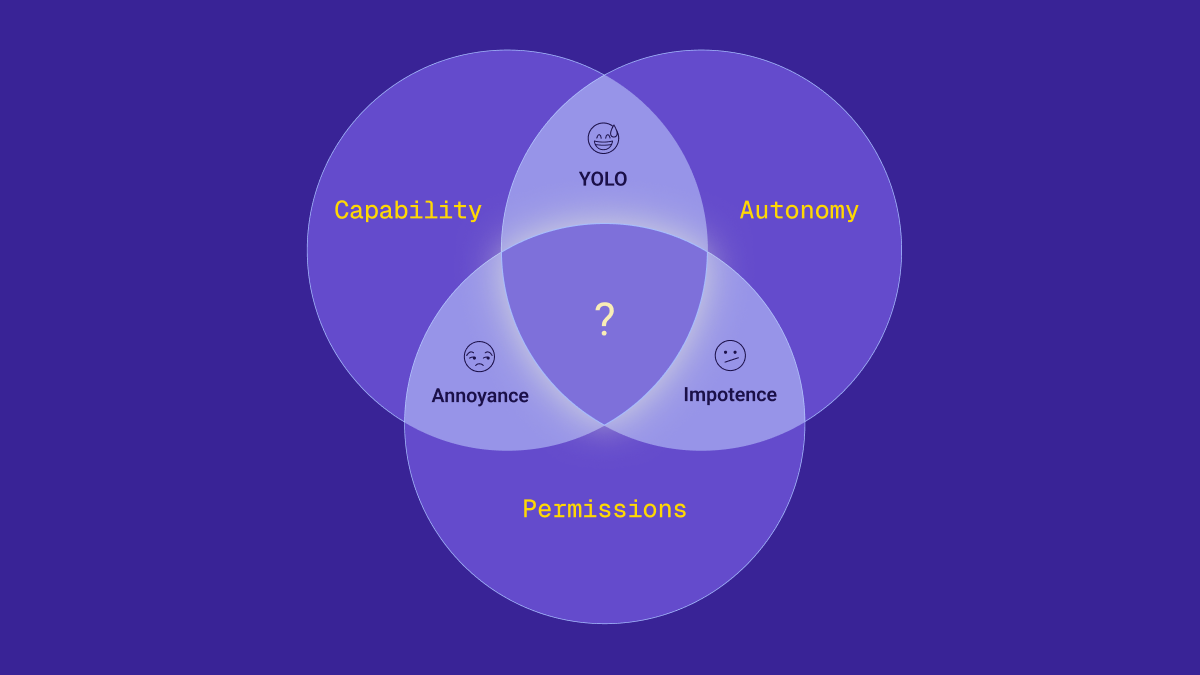

Every team deploying agents hits the same wall: Capability, Autonomy, Permissions — pick two. It looks like physics. It isn’t. The agent version of the CAP tradeoff is an infrastructure problem — permissions weren’t designed for non-human actors that operate at machine speed, can be tricked, and bear no consequences. That means it’s solvable.

The tradeoff every security team hits

Here's what I hear from every security team I talk to: we know we need agents. We know they're coming. And we know our current permissions will break under them.

These teams aren't moving ahead because they're reckless. They're moving ahead because they don't have a choice. The board wants agents in production. The CEO is asking why they aren't shipping yet. And the security team is sprinting to keep up. But no matter how fast they move, they keep hitting the same wall: a tradeoff where they can pick two of the three things they want and accept that the third will suffer.

I've spent the past seven years building permissions infrastructure. One idea shaped how a generation of engineers thought about distributed systems: Brewer's CAP theorem. A distributed database can guarantee at most two of three properties: Consistency, Availability, and Partition Tolerance. Pick two. That tradeoff isn't a bug in any particular system. It's a structural property of how distributed computing works: network partitions happen, and no engineering can wish them away.

I see the same shape emerging with agents. Every organization I talk to is stuck choosing between three things they want simultaneously:

- Capability: the agent can access real tools, data, and systems.

- Autonomy: the agent acts without a human approving each step.

- Permissions: access is scoped, least-privilege, and auditable.

This isn’t a formal proof—it’s a model for thinking about the constraints teams actually face. In practice, you optimize for two. The third suffers.

You may not have been able to put this into words, but if you’re in security, you recognize this bind immediately. You've felt it. And you've probably concluded it's unavoidable.

The three modes

You're already in one of the following modes. So is every other team deploying agents. The question is which one—and what it's costing you.

Capability + Autonomy: Full self-driving

The agent has access to real systems and operates autonomously. You've trusted the algorithm to obey every rule, respect every boundary, and care about safety as much as you do. This is what happens when someone turns on --dangerously-skip-permissions in their coding agent and lets it run. No hands on the wheel.

And this isn't hypothetical. One recent scan found over 40,000 OpenClaw instances exposed to the internet, with community skills containing malicious instructions designed to exfiltrate data and harvest credentials. The agents had inherited permissions across their users' entire digital lives.

Autonomy + Permissions: Cruise control

The agent operates within strict boundaries and acts on its own, but it can't reach anything meaningful. Like cruise control on an empty highway — it holds a steady speed, but it can't change lanes, navigate an exit, or react to what's happening around it. Read-only access to a single system. Technically autonomous, functionally not that useful.

This is where most "safe" agent deployments live today—agents that exist in the org chart but can't actually do much. Impotent agents. Safe, sure. But no one deployed an agent to hold a steady speed on an empty road.

Capability + Permissions: Passenger seat with your teenage driver

The agent can touch real systems, but a human approves every action. You're riding shotgun, hands hovering over the wheel, approving every lane change. For a driving lesson, this makes sense. For a daily commute, it's unsustainable.

In production, it creates consent fatigue. The human stops reading the approval prompts and starts clicking "yes" reflexively — the same pattern that contributed to a 13-hour AWS outage. You've achieved the worst of both worlds: an agent that's slow because a human is in the loop, and unsafe because that human stopped paying attention.

This is where most coding agents are today. Claude Code, Cursor, Copilot—they all default to some version of human-in-the-loop. It works for vibe-coded demos. It doesn’t scale to production.

Every organization I talk to is stuck in one of these modes. Not because they made bad choices, but because they're running on permissions infrastructure built for a different world.

It's not your fault. It's the system.

Capability and autonomy improve with every model release. Opus, GPT, Gemini—the frontier keeps pushing forward. These axes are getting better on their own.

Permissions haven’t moved. We’re still running on a decades-old model designed for human users: roles assigned at onboarding, in coarse-grained bundles that include far more access than anyone needs, and rarely revisited until someone leaves the company. Our data shows that 96% of a user's permissions go unused. Broken access control has ranked #1 on the OWASP Top 10 since the 2021 edition.

The industry tolerated this for humans. We trusted employees to do the right thing — and there’s a natural limit on how much damage one person can do through a normal UI in an eight-hour workday.

That was an acceptable bargain.

But you know the assumptions behind that system no longer hold. Agents don't have judgment. They don't follow workflows out of professional discipline. They can be tricked by prompt injection. They operate at machine speed, at any hour, with no natural stopping point. An overpermissioned agent can exfiltrate, mutate, or destroy an entire system in seconds.

And yet.

CyberArk's research found that 45% of enterprises apply the same privileged access controls to AI agents that they use for human identities. Another 33% have no clear AI access policies at all. The default approach is impersonation: give the agent the same permissions as the user who triggered it. That hands a tireless, fast, non-accountable system the full blast radius of a human's access.
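To make the blast-radius point concrete, here is a minimal sketch contrasting impersonation with a scoped alternative. The permission strings and both function names are hypothetical, invented for illustration; this is not how any particular product models access.

```python
def impersonated(user_permissions):
    """Impersonation: the agent receives everything the triggering user has."""
    return set(user_permissions)


def delegated(user_permissions, task_scope):
    """Assumed alternative: the agent gets only what the user has AND the task needs."""
    return set(user_permissions) & set(task_scope)


# Hypothetical developer with broad standing access.
user = {"repo:read", "repo:write", "secrets:read", "infra:deploy", "billing:admin"}
# Hypothetical scope for a refactoring task.
task = {"repo:read", "repo:write"}

print(sorted(impersonated(user)))     # all five permissions reach the agent
print(sorted(delegated(user, task)))  # only the two repo permissions do
```

Under impersonation, a prompt-injected agent can reach everything the user can. Under the intersection, the most it can reach is what the task called for.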

Right now, every organization is writing an AI policy: literally a document. The "security model" is to distribute it, train people on it, and hope for the best.

That's not a security model. That's a prayer.

Physics vs. infrastructure

The shape looks the same as Brewer's tradeoff: three desirable properties, a structural constraint that forces you to choose. But the underlying cause is different.

CAP in the database world is a physics problem. Network partitions happen, and no engineering can wish them away. The tradeoff is baked into reality.

The agent tradeoff is an infrastructure problem that we can actually address.

And here's what makes this one solvable: the core problem is overpermissioning, and for the first time, we have both the reason and the means to fix it.

For fifty years, everyone has agreed that least privilege is the right goal. For fifty years, we've done almost nothing about it, because doing it right for humans was impractical and, well, the juice wasn't worth the squeeze. You'd have to understand every person's job in detail, map it against every system they touch, grant and revoke access dynamically, and do it continuously. Nobody was going to do that. So we took shortcuts: broad roles, standing access, and dreaded quarterly access reviews to check the box.

Agents change the calculus, because permissions weren't designed for non-human actors that operate at machine speed, can be tricked, and bear no consequences for their mistakes. The likelihood of getting it wrong has increased by orders of magnitude—and so has the cost. An overpermissioned human is a manageable risk. An overpermissioned agent is a breach waiting to happen. And that means there's finally a business-justifiable reason to fix it.

At the same time, we now have AI that can actually do the work humans never would. Analyze every permission and access log across your organization. Identify what's actually used versus what's been sitting untouched for years. Recommend reductions. Then monitor continuously—because you should assume you got it wrong—and attenuate at runtime based on what's actually happening in the session. Not a quarterly review. A continuous process.
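The "used versus untouched" step is, at its simplest, a set difference between what is granted and what shows up in the access log. The sketch below assumes a hypothetical log format of (principal, permission) pairs and made-up permission names; real logs and grant models are messier.

```python
from collections import defaultdict


def unused_permissions(granted, access_log):
    """Return, per principal, the granted permissions that never appear in the log."""
    used = defaultdict(set)
    for principal, permission in access_log:
        used[principal].add(permission)
    return {p: perms - used[p] for p, perms in granted.items()}


# Hypothetical grants and access log, for illustration only.
granted = {
    "dev-alice": {"repo:read", "repo:write", "secrets:read", "infra:deploy"},
}
access_log = [
    ("dev-alice", "repo:read"),
    ("dev-alice", "repo:write"),
    ("dev-alice", "repo:read"),
]

report = unused_permissions(granted, access_log)
print(report)  # candidates for reduction: secrets:read and infra:deploy
```

The output is a list of candidates, not a verdict. Because the log covers a finite window, a reduction based on it can be wrong, which is why the continuous monitoring step matters.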

What breaking the tradeoff actually looks like

The answer isn't better roles or more policy documents. It's permissions that shrink.

Everything in traditional access management expands over time. Roles accumulate, access creeps, permissions granted for a specific project persist long after the project ends. For agents, this is a loaded gun.

Automated Least Privilege flips the direction. Instead of permissions that expand over time, you scope down to what’s actually used, monitor every action in real time, attenuate access based on session context, and audit everything. Not a best practice pinned to a wiki. Not a line item in an AI policy document. Infrastructure.

Here’s what it looks like in practice. Say a developer kicks off a coding agent to refactor a service. The agent inherits that developer’s permissions—which, like most developers, include access to repos, secrets, and infrastructure they haven’t touched in months.

With the right infrastructure, that excess access never reaches the agent. The developer’s permissions have already been scoped down to what they actually use. Every tool call the agent makes is captured in real time—every prompt, every MCP server interaction, every response. Midway through the session, the agent tries to query the secrets manager. That’s anomalous for a refactoring task. Access narrows automatically, before the request completes. The security team gets an alert. The agent keeps working on what it was supposed to do—and can’t touch what it wasn’t. The full trail is there for the next audit. This is what we’re building at Oso.
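As a rough sketch of that session, assuming a hypothetical `Session` object that knows which permissions a task type normally needs (the `expected` set is an assumption, as are all names here, and this is not Oso's implementation): an allowed-but-unexpected request is denied, removed from the session, logged, and flagged.

```python
from dataclasses import dataclass, field


@dataclass
class Session:
    task: str
    allowed: set   # permissions the agent still holds in this session
    expected: set  # hypothetical baseline: what this kind of task normally needs
    audit: list = field(default_factory=list)
    alerts: list = field(default_factory=list)

    def request(self, permission: str) -> bool:
        if permission not in self.allowed:
            decision = "deny"
        elif permission not in self.expected:
            # Anomalous for this task: narrow access before the request completes.
            self.allowed.discard(permission)
            self.alerts.append(f"{self.task}: unexpected request for {permission}")
            decision = "deny"
        else:
            decision = "allow"
        self.audit.append((permission, decision))  # every request is recorded
        return decision == "allow"


session = Session(
    task="refactor",
    allowed={"repo:read", "repo:write", "secrets:read"},
    expected={"repo:read", "repo:write"},
)
results = [
    session.request("repo:read"),
    session.request("secrets:read"),  # anomalous for a refactoring task
    session.request("repo:write"),    # the agent keeps working afterwards
]
print(results, session.alerts)
```

The design choice worth noting is that the anomaly narrows the session rather than ending it: the refactoring work continues, and only the unexpected access is removed.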

The window is closing

Models will keep getting better. Organizations will keep adopting agents. The pressure to give agents real access to real systems will only intensify.

But capability and autonomy improving doesn't fix the permissions problem. It makes it more urgent. Every model improvement that makes agents more useful also makes overpermissioned agents more dangerous.

Most agents are still in pilots. This is your window. The teams that solve permissions now will ship agents. The teams that don’t will be writing incident reports explaining why they couldn’t.

Capability and autonomy are already here. Permissions are the last unsolved variable. If you think I’m wrong, tell me why—I'm @grahamneray on X and LinkedIn. And if you want to go deeper on how we're approaching this at Oso, that's here.
